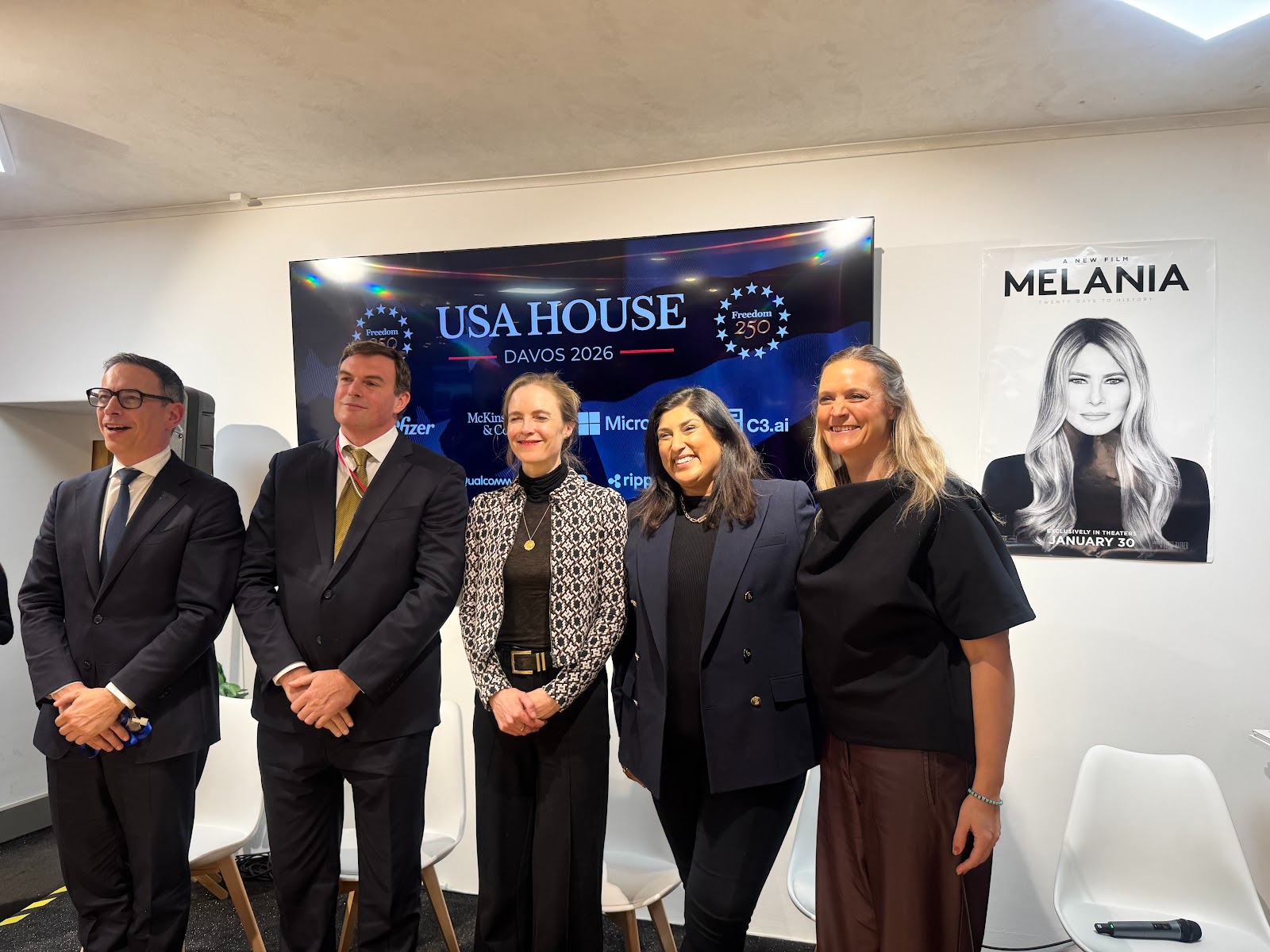

Singapore has delivered something that policy watchers and AI practitioners have been anticipating: the world's first comprehensive governance framework designed specifically for agentic AI systems. Announced by Minister Josephine Teo at the World Economic Forum in Davos on January 22, 2026, the framework addresses the unique challenges posed by AI systems that can reason, plan, and act autonomously.

For those of us deploying AI agents in production, this is not just another policy document. It provides concrete guidance on managing systems that operate with increasing independence. As agentic AI moves from experimental demos to enterprise workflows, the governance questions become unavoidable.

Why Agentic AI Needs Specific Governance

Traditional AI governance frameworks were designed for systems that analyze data and make recommendations. Agentic AI is fundamentally different. These systems can initiate tasks autonomously, modify databases, execute multi-step workflows, and coordinate with other agents without human intervention at each step.

The Infocomm Media Development Authority (IMDA), which developed the framework, identifies several risks specific to agentic systems:

- Unauthorized operations: Agents may take actions beyond their intended scope

- Cascading failures: Errors in one agent can propagate through interconnected systems

- Information disclosure: Agents with data access can inadvertently leak sensitive information

- Automation bias: Humans may over-trust agent decisions and reduce meaningful oversight

The framework addresses these risks by asking organizations to approach agentic AI governance across four distinct dimensions rather than treating it as an extension of traditional AI risk management.

The Four Pillars of Agentic AI Governance

The IMDA framework structures governance around four core areas. Each addresses a different phase of the agentic AI lifecycle.

Pillar 1: Assessing and Bounding Risks Upfront

Before deploying an agentic system, organizations should evaluate what the framework calls the "autonomy surface area." This includes:

- The degree of agent autonomy relative to the task sensitivity

- Which external tools and systems the agent can access

- What data the agent can read or modify

- Potential cascading effects if the agent makes errors

The guidance recommends limiting agent autonomy and tool access to the minimum required for the task. Sandboxed environments and permission systems should constrain agent capabilities by default.
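To make the "minimum required" idea concrete, here is a minimal sketch of a deny-by-default permission check. It is my own illustration, not code from the IMDA framework: the `AgentPolicy` class, the `authorize` function, and the tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Optional


class ToolNotPermitted(Exception):
    """Raised when an agent requests something outside its policy."""


@dataclass(frozen=True)
class AgentPolicy:
    # Hypothetical policy object: anything not listed here is denied.
    agent_id: str
    allowed_tools: FrozenSet[str] = field(default_factory=frozenset)
    writable_resources: FrozenSet[str] = field(default_factory=frozenset)


def authorize(policy: AgentPolicy, tool: str,
              resource: Optional[str] = None, write: bool = False) -> None:
    """Deny by default: raise unless the tool (and any write target) is listed."""
    if tool not in policy.allowed_tools:
        raise ToolNotPermitted(f"{policy.agent_id} may not call {tool!r}")
    if write and resource not in policy.writable_resources:
        raise ToolNotPermitted(f"{policy.agent_id} may not write to {resource!r}")


# Example policy: the agent can read and update invoices, nothing else.
invoice_agent = AgentPolicy(
    agent_id="invoice-agent",
    allowed_tools=frozenset({"read_invoice", "update_invoice_status"}),
    writable_resources=frozenset({"invoices"}),
)

authorize(invoice_agent, "read_invoice")  # passes silently
authorize(invoice_agent, "update_invoice_status", resource="invoices", write=True)
```

The design choice worth noting is that the policy starts empty. Adding a capability is an explicit act, which makes later audits of tool access and data permissions much simpler.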

Pillar 2: Making Humans Meaningfully Accountable

This pillar addresses a core challenge: as AI systems become more autonomous, human accountability can become diffuse. The framework calls on organizations to define clear roles across the agent lifecycle and establish explicit approval checkpoints.

The emphasis on "meaningful" accountability is important. It is not enough to have a human nominally responsible. The framework warns against automation bias, where supervisors rubber-stamp agent decisions without genuine oversight. Organizations need decision points where humans actively evaluate agent behavior, not just passively monitor outputs.
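One way to picture an approval checkpoint is the sketch below. It is an assumption-laden illustration rather than anything the framework prescribes: the `ProposedAction` and `Decision` types, the "low"/"high" risk labels, and the rule that a reviewer must supply a written rationale are all hypothetical choices of mine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    description: str
    risk: str  # hypothetical labels: "low" or "high"


@dataclass(frozen=True)
class Decision:
    approved: bool
    reviewer: str
    rationale: str


def run_with_checkpoint(
    action: ProposedAction,
    execute: Callable[[ProposedAction], str],
    review: Callable[[ProposedAction], Decision],
) -> str:
    """Run low-risk actions directly; route high-risk ones through a human."""
    if action.risk == "high":
        decision = review(action)
        # Requiring a rationale is a small nudge against rubber-stamping.
        if not decision.rationale.strip():
            raise ValueError("approval checkpoint requires a written rationale")
        if not decision.approved:
            return f"blocked by {decision.reviewer}: {decision.rationale}"
    return execute(action)
```

In a real deployment the `review` callable would block on a ticketing or chat workflow. The point of the sketch is only that the decision records who reviewed the action and why.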

Pillar 3: Implementing Technical Controls

The framework specifies technical safeguards that should be implemented throughout the agent lifecycle:

- Sandboxing: Isolating agents to prevent unauthorized access to systems or data

- Safety testing: Validating tool usage, policy compliance, and workflow reliability before production deployment

- Continuous monitoring: Detecting anomalies in agent behavior during operation

- Privilege escalation protections: Preventing agents from gaining access beyond their authorized scope

Organizations are advised to pursue a gradual rollout rather than immediate full deployment, so that real-world behavior can be observed and corrected before scaling.
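As a rough illustration of continuous monitoring, the sketch below compares an agent's observed tool calls against a baseline. The `find_anomalies` function, the `max_ratio` threshold, and the tool names are hypothetical, and real anomaly detection would need far richer signals than call counts.

```python
from collections import Counter
from typing import Iterable, List


def find_anomalies(baseline: Counter, observed: Iterable[str],
                   max_ratio: float = 3.0) -> List[str]:
    """Flag tools absent from the baseline or called far more often than usual."""
    counts = Counter(observed)
    alerts = []
    for tool, n in sorted(counts.items()):
        expected = baseline.get(tool, 0)
        if expected == 0:
            # The agent used a tool it has never used during baseline runs.
            alerts.append(f"unexpected tool: {tool}")
        elif n > expected * max_ratio:
            alerts.append(f"call spike: {tool} ({n} vs baseline {expected})")
    return alerts


baseline = Counter({"search_docs": 10, "read_invoice": 5})
observed = ["search_docs"] * 12 + ["read_invoice"] * 20 + ["delete_record"]
for alert in find_anomalies(baseline, observed):
    print(alert)
```

A check like this pairs naturally with a gradual rollout: the baseline can be collected from the early, limited deployment before access is widened.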

Pillar 4: Enabling End-User Responsibility

The final pillar addresses the users who interact with agentic systems. Organizations should provide:

- Training on agent permissions and capabilities

- Transparency about what the agent can and cannot do

- Mechanisms to intervene or disable agents when necessary

This acknowledges that end-users play an active role in agentic AI governance. Users who understand an agent's limitations are less likely to over-rely on its outputs or miss situations where human judgment is needed.
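A mechanism to intervene can be as simple as a stop flag that the agent loop checks before every step. The sketch below is a minimal, hypothetical version: `KillSwitch` and `run_agent` are names I made up, and a production system would also need to handle steps that are already in flight.

```python
import threading
from typing import Callable, List, Optional


class KillSwitch:
    """A user-facing stop flag; safe to set from another thread."""

    def __init__(self) -> None:
        self._stopped = threading.Event()
        self.reason: Optional[str] = None

    def disable(self, reason: str) -> None:
        self.reason = reason
        self._stopped.set()

    @property
    def active(self) -> bool:
        return not self._stopped.is_set()


def run_agent(steps: List[Callable[[], str]], switch: KillSwitch) -> List[str]:
    """Execute steps in order, checking the switch before each one."""
    completed = []
    for step in steps:
        if not switch.active:
            break  # stop before starting any further work
        completed.append(step())
    return completed
```

Recording the reason alongside the stop signal gives the accountable human something to review afterwards.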

Implications for Enterprise AI Deployments

Several aspects of this framework deserve attention from those building agentic systems.

Multi-agent coordination requires special consideration. Many production systems now involve multiple agents working in parallel, each specializing in different tasks. The framework notes that this increases efficiency but also compounds risks if errors cascade across agents. Organizations should evaluate how failures in one agent could affect the broader system.
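One common software pattern for containing cascades is a circuit breaker between agents. The framework does not name this pattern; I am swapping it in as an illustration. The `CircuitBreaker` class below is a deliberately simplified, hypothetical version with no timed reset.

```python
from typing import Any, Callable


class CircuitOpen(Exception):
    """Raised when calls to a downstream agent have been suspended."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0

    def call(self, fn: Callable[..., Any], *args: Any) -> Any:
        # Stop forwarding work once the downstream agent keeps failing.
        if self.failures >= self.max_failures:
            raise CircuitOpen("downstream agent isolated after repeated failures")
        try:
            result = fn(*args)
        except Exception:
            self.failures += 1
            raise
        self.failures = 0  # a success resets the consecutive-failure count
        return result
```

Once the breaker opens, upstream agents get an explicit error instead of silently passing bad output along, which is the moment to bring a human into the loop.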

Testing must go beyond traditional AI validation. The framework emphasizes testing "tool usage, policy compliance, and workflow reliability." This is broader than typical model evaluation. Agentic systems need validation of their entire operational context, including how they interact with external tools and handle edge cases.

Voluntary now, potentially mandatory later. The framework is currently non-binding. However, the IMDA explicitly designed it to inform future regulation. Organizations that align with these guidelines now will be better positioned if requirements become compulsory.

Regional Alignment Emerging

Singapore's framework does not exist in isolation. South Korea's AI Basic Act (2026) and Taiwan's AI Basic Act (2025) similarly address human oversight and data safeguards for autonomous systems. A shared regulatory language is emerging across Asia-Pacific that emphasizes human accountability and technical controls.

For organizations operating across multiple jurisdictions, this convergence simplifies compliance planning. Rather than managing disparate requirements, they can plan around an emerging consensus on core principles such as human oversight, risk assessment, and transparency, which makes the regulatory environment more predictable.

The UAE has not yet issued specific agentic AI governance guidance, but the principles in Singapore's framework align well with the Emirates' existing approach to AI regulation, which emphasizes responsible development while supporting innovation.

Practical Next Steps

For organizations deploying agentic AI, I recommend the following based on this framework:

- Audit current agent deployments for autonomy scope, tool access, and data permissions. Identify where boundaries could be tightened without losing functionality.

- Define accountability checkpoints for each agentic workflow. Document who reviews agent decisions and how.

- Implement continuous monitoring that goes beyond performance metrics to include behavioral anomaly detection.

- Document your governance approach. Even though the framework is voluntary, having clear governance documentation prepares you for future regulatory requirements and builds trust with enterprise customers.
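For the audit step, a simple starting point is to compare the permissions each agent has been granted with the tools it has actually used. The sketch below assumes you can export both as plain data; the `unused_permissions` function, the data shapes, and the agent and tool names are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def unused_permissions(
    granted: Dict[str, Set[str]],
    usage_log: List[Tuple[str, str]],
) -> Dict[str, Set[str]]:
    """Return, per agent, the granted tools that never appear in the usage log."""
    used: Dict[str, Set[str]] = {}
    for agent, tool in usage_log:
        used.setdefault(agent, set()).add(tool)

    report = {}
    for agent, tools in granted.items():
        idle = tools - used.get(agent, set())
        if idle:
            report[agent] = idle  # candidates for removal, pending human review
    return report


granted = {
    "invoice-agent": {"read_invoice", "send_payment"},
    "support-agent": {"search_docs"},
}
usage_log = [("invoice-agent", "read_invoice"), ("support-agent", "search_docs")]
print(unused_permissions(granted, usage_log))
```

An unused permission is not automatically safe to revoke, since some tools are needed only rarely, but the report gives reviewers a concrete list to start from.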

Looking Forward

Singapore's framework represents the beginning of formal governance for agentic AI, not the end. As these systems grow more capable and more integrated into critical business processes, governance requirements will likely expand.

The organizations that engage with these frameworks early, rather than waiting for mandates, will shape how agentic AI governance evolves. For AI practitioners in the Gulf region and beyond, this framework provides a solid foundation for building responsible agentic systems that maintain human oversight without sacrificing the efficiency gains that make these systems valuable.