A team of researchers at UC Santa Barbara has introduced Group-Evolving Agents (GEA), a framework that rethinks how AI agents improve themselves. Instead of evolving agents in isolation, GEA treats a group of agents as the unit of evolution, so experiences and innovations are shared across the group. The results are striking: on coding benchmarks, GEA comes within a point of the best human-designed agent frameworks on SWE-bench Verified and far exceeds human-designed approaches on Polyglot, while adding no inference cost at deployment.

Why This Matters for AI Agent Development

The current approach to building AI agents typically involves human experts painstakingly designing workflows, tools, and prompts. This is time-consuming, expensive, and often fails to generalize across different tasks. Self-evolving agents promise an alternative: systems that improve themselves autonomously through experience.

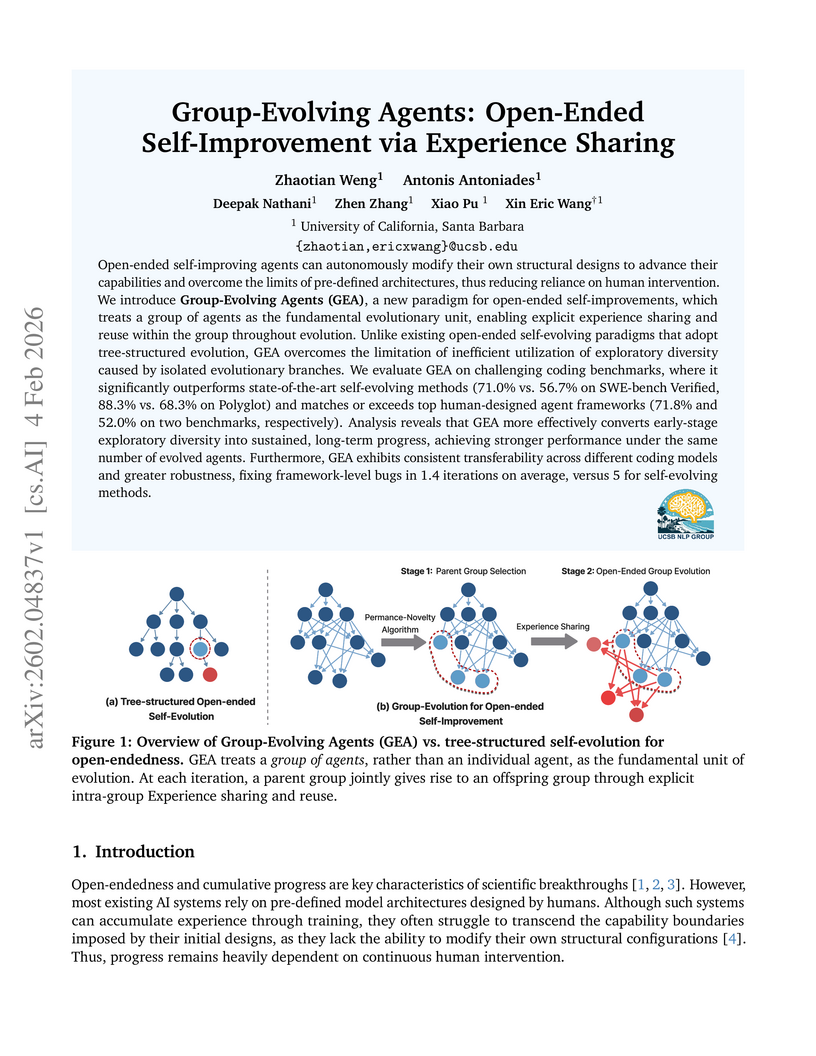

However, existing self-evolution approaches have a fundamental limitation. They use tree-structured evolution where each agent branch evolves in isolation. A breakthrough discovered by one branch cannot benefit agents in other branches. GEA solves this by treating the group itself as the evolutionary unit, enabling explicit experience sharing throughout the process.
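To make the contrast concrete, here is a minimal sketch of what an agent can "see" under each scheme. The lineage, agent names, and discoveries are all hypothetical and invented for illustration; this is not code from the GEA paper.

```python
# Hypothetical toy lineage: each agent maps to its parent (None for the root).
PARENT = {"root": None, "a1": "root", "a2": "a1", "b1": "root", "b2": "b1"}

# Hypothetical discoveries each agent made during its own run.
DISCOVERIES = {
    "root": {"basic edit tool"},
    "a1": {"faster code search"},
    "a2": set(),
    "b1": {"test-retry strategy"},
    "b2": set(),
}


def lineage_experience(agent):
    """Tree-structured evolution: an agent inherits only from its own ancestors."""
    seen = set()
    node = agent
    while node is not None:
        seen |= DISCOVERIES[node]
        node = PARENT[node]
    return seen


def group_experience(group):
    """Group evolution (as assumed here): the parent group pools every member's lineage."""
    seen = set()
    for member in group:
        seen |= lineage_experience(member)
    return seen


isolated = lineage_experience("a2")
shared = group_experience(["a2", "b2"])
print(sorted(shared - isolated))  # what branch "a" only gains through sharing
```

In the isolated case, agent `a2` never learns about the discovery made in branch `b`; once the two branches form a group, it does.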

How Group-Evolving Agents Work

GEA operates in two distinct phases. First, during the evolution phase, groups of agents collaborate and share their discoveries. Then, at deployment, you simply use the best evolved agent with no additional overhead.
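The two phases can be sketched as an offline loop followed by a single selection step. This is a toy under loud assumptions: `Agent`, `evolve`, and `deploy` are hypothetical names, scores are random stand-ins for benchmark results, and the "sharing" is reduced to every child starting from the parent group's best score. It only shows why deployment costs nothing extra: the evolution loop does not run at inference time.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    name: str
    score: float  # hypothetical benchmark score in [0, 1]


def evolve(initial, generations, group_size, seed=0):
    """Offline phase: grow an archive of agents, one parent group per generation."""
    rng = random.Random(seed)
    archive = list(initial)
    for gen in range(generations):
        # Pick the current top agents as the parent group (simplified selection).
        parents = sorted(archive, key=lambda a: a.score, reverse=True)[:group_size]
        # Stand-in for experience sharing: children build on the group's best result.
        shared_best = max(p.score for p in parents)
        for i in range(len(parents)):
            child_score = shared_best + rng.uniform(-0.05, 0.10)
            child_score = max(0.0, min(1.0, child_score))
            archive.append(Agent(f"g{gen}-c{i}", child_score))
    return archive


def deploy(archive):
    """Deployment phase: a single agent is used; the group is not needed at runtime."""
    return max(archive, key=lambda a: a.score)


initial = [Agent("seed-0", 0.20), Agent("seed-1", 0.25)]
archive = evolve(initial, generations=3, group_size=2)
best = deploy(archive)
print(best.name, round(best.score, 3))
```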

The framework introduces several key innovations:

- Performance-Novelty Selection: GEA balances task competence with exploratory diversity when selecting parent groups. This prevents premature convergence on local optima while maintaining high performance.

- Shared Experience Pools: Unlike isolated evolutionary branches, all agents contribute their trajectories, code patches, and execution logs to a common pool. Each offspring agent can draw on insights from any member of the parent group, not only from its own direct ancestors.

- Collective Offspring Generation: Parent groups jointly produce child groups, enabling complementary adaptations that would be impossible in isolated evolution.
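The first item in the list above, performance-novelty selection, can be illustrated with a small sketch. The scoring rule here is an assumption, not the paper's formula: performance is the fraction of tasks solved, novelty is the mean Hamming distance between an agent's task-outcome vector and everyone else's, and the two are combined with a hypothetical `novelty_weight`. All names and data are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    solved: tuple  # 1 if the agent solved task i, else 0 (hypothetical data)


def performance(c):
    """Fraction of tasks solved."""
    return sum(c.solved) / len(c.solved)


def hamming(a, b):
    """Fraction of tasks on which two outcome vectors disagree."""
    return sum(x != y for x, y in zip(a, b)) / len(a)


def novelty(c, population):
    """Mean distance from c to every other candidate (assumed novelty measure)."""
    others = [o for o in population if o is not c]
    if not others:
        return 0.0
    return sum(hamming(c.solved, o.solved) for o in others) / len(others)


def select_parent_group(population, k, novelty_weight=1.0):
    """Rank by performance plus weighted novelty and keep the top k."""
    def key(c):
        return performance(c) + novelty_weight * novelty(c, population)
    return sorted(population, key=key, reverse=True)[:k]


population = [
    Candidate("A", (1, 1, 1, 0)),
    Candidate("B", (1, 1, 1, 0)),  # same behaviour as A
    Candidate("C", (0, 0, 1, 1)),  # weaker, but solves a task nobody else does
    Candidate("D", (1, 1, 0, 0)),
]

greedy = [c.name for c in select_parent_group(population, k=2, novelty_weight=0.0)]
balanced = [c.name for c in select_parent_group(population, k=2, novelty_weight=1.0)]
print(greedy, balanced)
```

With the novelty weight at zero, the parent group is two behaviourally identical agents; with novelty included, the weaker but different agent `C` is selected alongside `A`, which is the kind of diversity the selection step is meant to preserve.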

The researchers describe the core insight elegantly: "AI agents are not biological individuals; why should their evolution remain constrained by biological paradigms?"

Benchmark Results That Challenge Human Design

On SWE-bench Verified, a benchmark of real GitHub issues including bugs and feature requests, GEA achieved a 71.0% success rate. This compares to 56.7% for baseline self-evolving methods and 71.8% for the best human-designed agent frameworks, so GEA trails the best human design by less than a point. On Polyglot, GEA reached 88.3% versus 52.0% for human-designed approaches.
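For readers who want the gaps spelled out, the following snippet computes the percentage-point differences from the figures quoted above. The dictionary layout and the `gap` helper are just an illustrative convenience.

```python
# Success rates (in percent) as quoted in this article.
results = {
    "SWE-bench Verified": {
        "GEA": 71.0,
        "self-evolving baseline": 56.7,
        "human-designed": 71.8,
    },
    "Polyglot": {
        "GEA": 88.3,
        "human-designed": 52.0,
    },
}


def gap(benchmark, other):
    """Percentage-point difference between GEA and another approach."""
    scores = results[benchmark]
    return round(scores["GEA"] - scores[other], 1)


for benchmark, scores in results.items():
    for other in scores:
        if other != "GEA":
            print(f"{benchmark}: GEA vs {other}: {gap(benchmark, other):+.1f} points")
```

The differences are +14.3 points over the self-evolving baseline and -0.8 points against the best human-designed framework on SWE-bench Verified, and +36.3 points on Polyglot.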

Perhaps more impressive is GEA's robustness. When it encountered framework bugs during evolution, GEA repaired them in an average of 1.4 iterations, versus 5 for baseline methods, roughly 3.6 times as many. This suggests that shared experience pools make the system more resilient.

The improvements also transfer across models. Agents evolved using GPT-series models maintain their gains when deployed with Claude-series models, and vice versa. This transferability indicates that GEA discovers general-purpose optimizations rather than model-specific tricks.

What GEA Discovers Autonomously

Examining what GEA evolves reveals sophisticated engineering decisions that typically require human expertise:

- Automated tool discovery: GEA agents autonomously develop and refine tools for code search, file manipulation, and test execution.

- Workflow optimization: The framework discovers effective sequences for approaching software engineering tasks, including when to explore versus exploit.

- Error recovery strategies: Evolved agents develop robust fallback mechanisms that handle edge cases gracefully.

The research shows that the weakest of GEA's top five agents (58.3%) still outperforms the baseline's best agent (56.7%). This population-level improvement suggests that group evolution raises the quality of the whole population, not just of a single standout agent.

Practical Implications for AI Practitioners

For those of us building AI systems in production, GEA offers several valuable lessons:

Zero inference cost matters. Many multi-agent approaches add significant latency and cost at deployment. GEA's two-phase design, where evolution happens offline and deployment uses a single optimized agent, makes it practical for real-world applications.

Experience sharing beats isolation. Whether you are using GEA directly or designing your own agent systems, enabling knowledge transfer between components can unlock performance gains that isolated optimization cannot achieve.

Autonomous improvement is becoming real. We are moving toward systems that can genuinely improve themselves without constant human intervention. GEA demonstrates this is not just theoretical: on SWE-bench Verified it reaches near parity with expert-designed frameworks (71.0% versus 71.8%).

Looking Ahead

The GEA paper, authored by Zhaotian Weng, Antonis Antoniades, Deepak Nathani, Zhen Zhang, Xiao Pu, and Xin Eric Wang, represents an important step toward truly autonomous AI systems. As these techniques mature, I expect we will see fewer hand-crafted agent workflows and more systems that evolve their own approaches.

For organizations in the UAE and broader Middle East investing in AI capabilities, this research suggests that the next generation of AI tools may require less human design expertise and more infrastructure for agent evolution. The competitive advantage will shift from who can build the cleverest prompts to who can create the best environments for AI systems to improve themselves.

The paper is available on arXiv, and I expect we will see GEA-inspired approaches appearing in production AI systems within the next year.