The design-to-code handoff has been a source of friction in product development for as long as I can remember. Designers create pixel-perfect mockups, then engineers translate those mockups into code, often losing fidelity along the way. Figma and OpenAI just announced an integration that aims to eliminate this friction entirely by connecting Codex directly to Figma through the Model Context Protocol.

What the Integration Actually Does

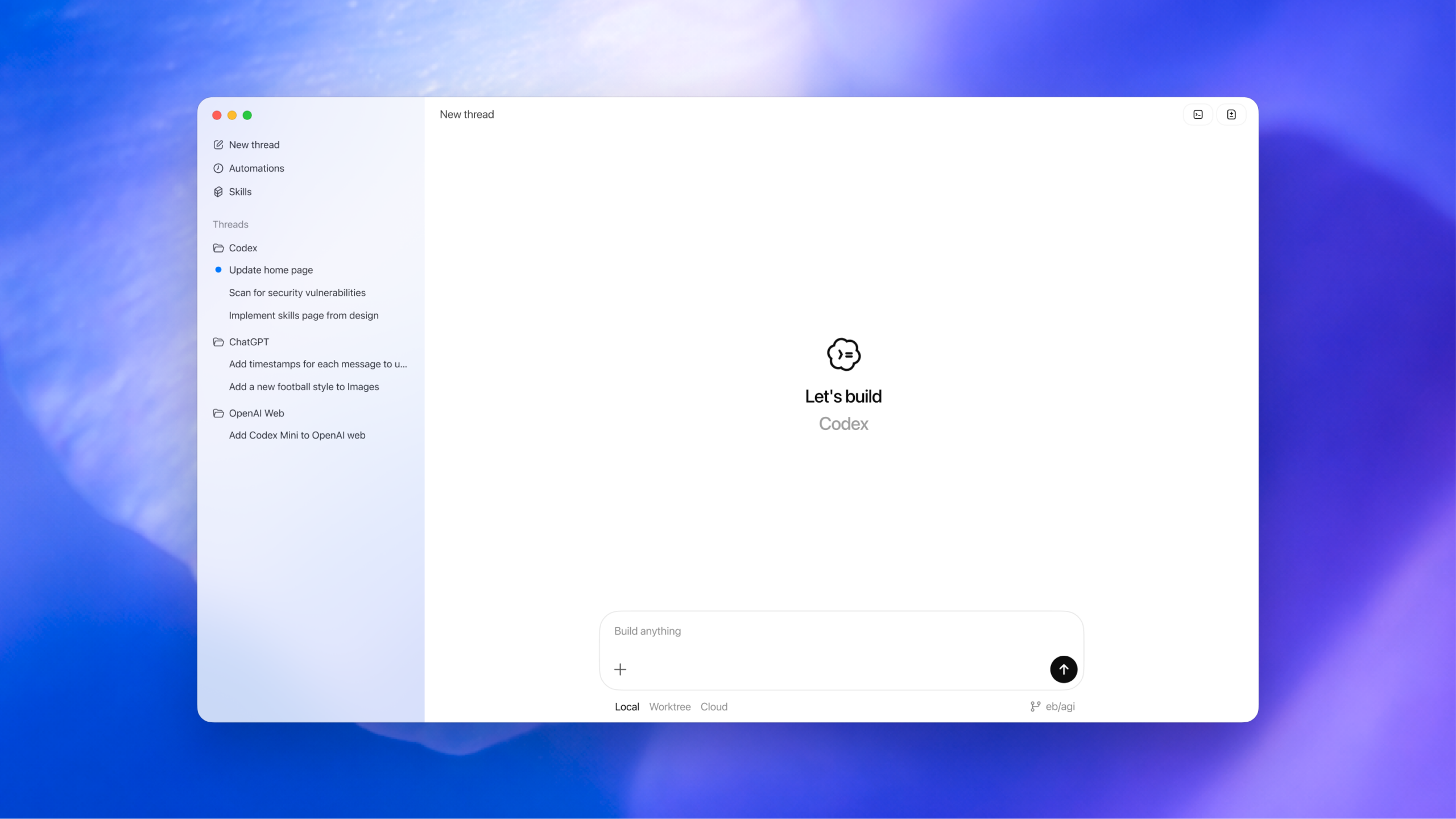

The new Figma MCP server creates a bidirectional connection between design and code. Unlike previous one-way workflows where you could export CSS snippets from Dev Mode or generate React components from Figma Make, this integration allows context to flow in both directions. You can generate editable Figma designs from code prompts in Codex, and you can convert existing Figma designs into working code with full awareness of your design system.

The system accesses code, Figma files, and design systems simultaneously. When you ask Codex to implement a Figma design, it understands your existing component library and matches the implementation to your established patterns. When you generate a design from Codex, the output lands as editable Figma components, not static images.

Figma's chief design officer described the goal as combining "the best of code with the creativity, collaboration, and craft" that design platforms offer. From the developer perspective, Codex's product lead noted that engineers can now iterate visually without leaving their flow, while designers work closer to real implementation.

How MCP Enables This

The Model Context Protocol (MCP), an open standard for connecting AI assistants to external tools and data, is the technical foundation making this possible. Figma offers two deployment options: a remote MCP server that connects to their hosted endpoint, and a desktop MCP server that runs locally through the Figma app on macOS.

The core capability is the get_design_context tool, which extracts layouts, styles, component hierarchies, text content, SVG data, images, layer names, and annotations from Figma files. This structured information gives Codex the context it needs to generate accurate implementations rather than generic approximations.
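To make the mechanics concrete, here is a minimal sketch of what a call to this tool might look like on the wire. MCP is built on JSON-RPC 2.0, and a client invokes a server's tools through the tools/call method. The argument names (fileKey, nodeId) and the sample values are assumptions for illustration, not Figma's documented schema; the server's own tool listing is the place to check the real parameters.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical arguments: the real get_design_context schema may differ.
request = build_tool_call(
    request_id=1,
    tool_name="get_design_context",
    arguments={"fileKey": "EXAMPLE_FILE_KEY", "nodeId": "1:2"},
)

print(json.dumps(request, indent=2))
```

In practice Codex builds and sends this message for you; the sketch only shows the shape of the request that sits behind a natural-language prompt.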

A typical workflow might look like this: you open Codex and prompt it with "Help me implement this Figma design using my existing design system components." The MCP server retrieves the relevant context from your Figma file, and Codex generates code that actually uses your established patterns. No more reverse engineering design tokens or guessing at spacing values.
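As a rough illustration of the manual step this is meant to remove, the sketch below snaps a raw pixel value measured from a mockup to the nearest spacing token. The token names and values are invented for the example; they do not come from Figma or from any particular design system.

```python
# Hypothetical spacing scale; a real design system defines its own tokens.
SPACING_TOKENS = {
    "space-1": 4,
    "space-2": 8,
    "space-3": 12,
    "space-4": 16,
    "space-6": 24,
    "space-8": 32,
}


def nearest_spacing_token(pixels: float) -> str:
    """Return the token whose value is closest to the measured pixel value.

    Ties resolve to the smaller token because the dict is listed in
    ascending order and min() keeps the first minimum it finds.
    """
    return min(SPACING_TOKENS, key=lambda name: abs(SPACING_TOKENS[name] - pixels))


# A 15px gap in a mockup most likely means the 16px token.
print(nearest_spacing_token(15))  # space-4
print(nearest_spacing_token(22))  # space-6
```

When the design context already carries the token or variable behind a value, this kind of guesswork becomes unnecessary, which is the practical benefit the workflow above promises.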

Why This Matters for Product Teams

For teams in the UAE and across the Middle East building digital products, this integration addresses a real pain point. The design-to-code handoff often introduces delays, miscommunication, and technical debt. Designers create something beautiful, engineers implement something functional, and the two do not always match.

The bidirectional flow means iteration can happen in either direction. If an engineer discovers that a design needs modification during implementation, they can make adjustments that are reflected back in the design file. If a designer wants to see how a component renders with real data, they can work with generated code rather than static mockups.

This also has implications for smaller teams where roles overlap. Many startups do not have dedicated designers and engineers working in parallel. A single person might wear both hats, and eliminating the context switch between design and code tools saves meaningful time.

Current Limitations

It is worth noting what this integration does not solve. The design-to-code gap exists not just because of tooling limitations, but because design and implementation are fundamentally different disciplines. A Figma mockup represents visual intent, while production code must handle edge cases, accessibility requirements, responsive behavior, and state management.

The MCP integration excels at extracting visual context, but complex application logic still requires human judgment. If your Figma design shows a form, the generated code will render that form, but business validation rules and API integration remain engineering work.
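To illustrate the kind of work that stays with engineers, here is a minimal sketch of validation rules for a hypothetical signup form. The field names and rules are assumptions made up for this example; none of them could be read off a mockup, which is the point.

```python
import re

# Deliberately simple pattern for illustration; not a full RFC 5322 check.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_signup(form: dict) -> list[str]:
    """Return a list of human-readable errors; an empty list means valid."""
    errors = []
    email = form.get("email", "").strip()
    password = form.get("password", "")

    if not EMAIL_PATTERN.match(email):
        errors.append("Enter a valid email address.")
    if len(password) < 12:
        errors.append("Password must be at least 12 characters.")
    if form.get("accepted_terms") is not True:
        errors.append("You must accept the terms to continue.")
    return errors


print(validate_signup({"email": "user@example.com", "password": "short"}))
```

The design shows three fields and a button; the minimum password length and the requirement to accept terms live in product and legal decisions, not in the Figma file.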

The integration currently runs on the Codex desktop app for macOS. Teams using Windows or Linux, or those preferring browser-based workflows, will need to wait for broader platform support.

The Broader Trend

Figma announced a similar partnership with Anthropic for Claude Code integration just a week before this OpenAI announcement. The company is clearly positioning itself as a neutral platform that connects to multiple AI coding assistants rather than betting on a single provider.

This mirrors a pattern we are seeing across the industry. Rather than building proprietary AI capabilities, platforms are exposing their functionality through protocols like MCP that allow multiple AI systems to connect. The winner is the user, who gets to choose their preferred AI assistant while still accessing full platform capabilities.

OpenAI reports that over one million people use Codex weekly, with usage increasing more than 400 percent since the start of the year. Figma was among the first partners to launch a ChatGPT app back in October 2025, so this deeper integration builds on an established relationship.

Looking Ahead

The Figma and OpenAI Codex integration represents a meaningful step toward closing the design-to-code gap. For practitioners building products, especially those working in lean teams or moving quickly on MVPs, the ability to move fluidly between design and implementation removes a persistent source of friction.

Whether this integration delivers on its promise will depend on how well the extracted design context translates to production-quality code. Early workflows will likely require iteration and refinement. But the architectural foundation, bidirectional context sharing through an open protocol, points in a direction that benefits the entire product development workflow.

I will be testing this integration on upcoming projects and sharing observations on what works and what still needs improvement. For now, the direction is clear: AI is not replacing designers or developers, but it is removing the translation layer between them.