Yesterday, Anthropic made one of the most consequential announcements in AI history: they revealed Claude Mythos Preview, a frontier model so capable at finding software vulnerabilities that they deemed it too dangerous to release publicly. Instead, they launched Project Glasswing, a $100 million cybersecurity initiative that gives select organizations early access to the model for defensive purposes only.

What Claude Mythos Can Do

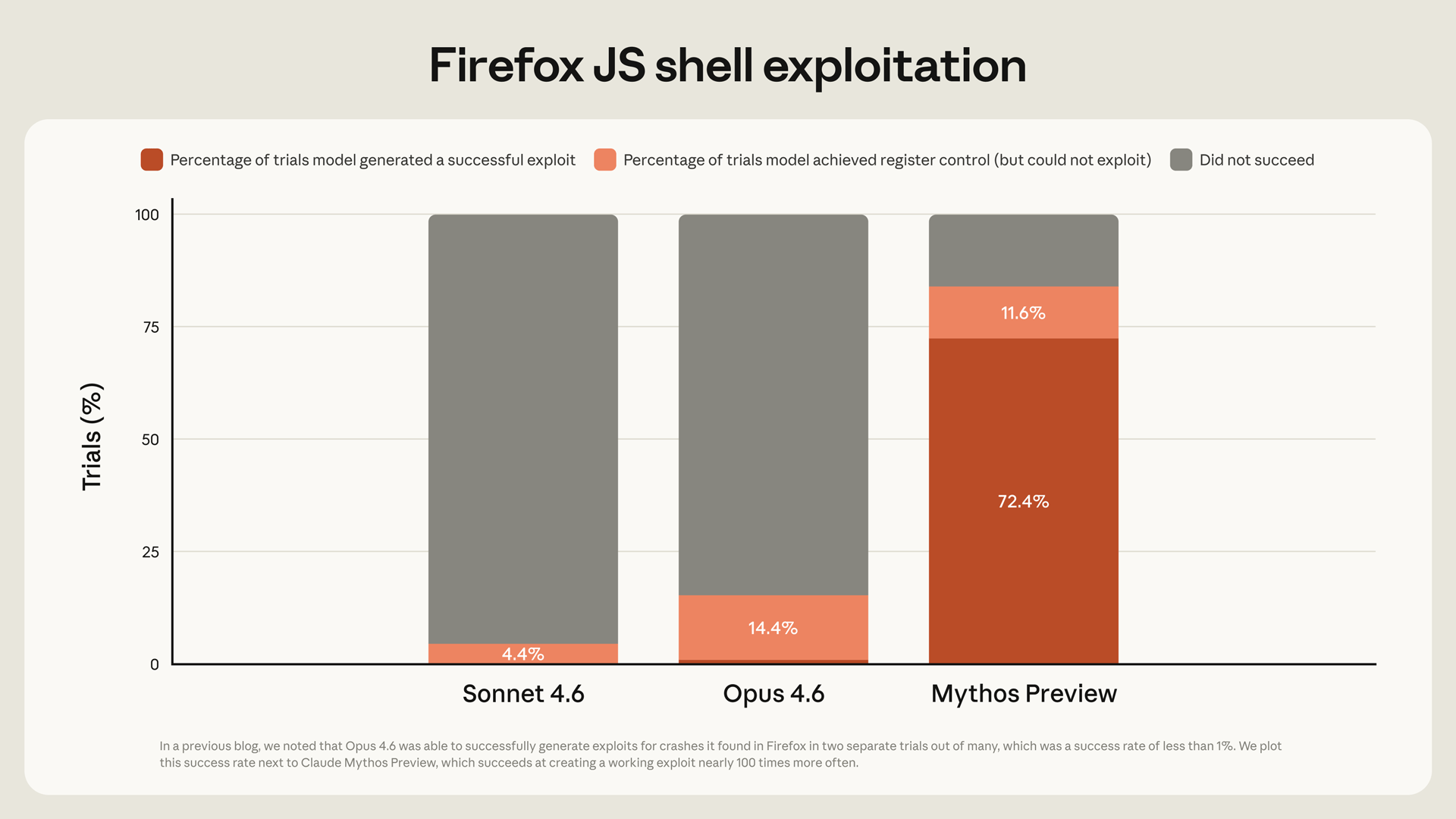

Claude Mythos Preview is a general-purpose frontier model, but its cybersecurity capabilities set it apart. According to Anthropic, the model has reached a level of coding capability where it can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

Over the past few weeks, Anthropic used Claude Mythos Preview to identify thousands of zero-day vulnerabilities across every major operating system and every major web browser, along with a range of other critical software. These are flaws previously unknown to developers, the kind that attackers prize most highly.

Several of these vulnerabilities had existed undetected for years. The oldest one discovered? A 27-year-old bug in OpenBSD, a system renowned for its security focus. That a single AI model could find a flaw that eluded human security researchers for nearly three decades shows how far automated vulnerability discovery has come, and suggests where AI capabilities are heading.

Project Glasswing: Controlled Disclosure

Rather than releasing Claude Mythos publicly (or keeping it entirely secret), Anthropic took a middle path. Project Glasswing allows a carefully selected group of organizations to use Mythos Preview exclusively for defensive security work.

The founding members include some of the biggest names in tech: Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, and Nvidia. Additionally, approximately 40 other organizations responsible for building or maintaining critical software infrastructure have gained access to scan and secure their systems.

This approach represents a new model for responsible AI deployment. Anthropic acknowledges that the capabilities that enable defensive security work can also be weaponized by attackers. By restricting access while still equipping defenders, they are trying to give the defensive side a head start.

Why Anthropic Is Holding Back

Anthropic explicitly stated they do not plan to make Claude Mythos Preview generally available. This is a significant departure from the typical AI lab playbook of racing to release new capabilities.

Their reasoning is straightforward: if attackers gained access to a model this capable at finding exploits, the fallout for economies, public safety, and national security could be severe. Anthropic has already warned government officials about the increased likelihood of sophisticated cyberattacks as AI capabilities improve.

However, they also acknowledge an uncomfortable truth: given the rate of AI progress, such capabilities will not remain confined to a few organizations for long. Other labs are making rapid advances. The window for defenders to prepare is measured in months, not years.

Implications for the Middle East

For those of us working in the UAE and broader Middle East region, Project Glasswing signals both opportunities and challenges. The region has invested heavily in digital transformation and smart city initiatives. Dubai, Abu Dhabi, Riyadh, and other major hubs are increasingly dependent on software infrastructure that could contain these same vulnerabilities.

Several practical considerations emerge from this announcement:

- Critical infrastructure audits: Organizations managing essential services should prioritize comprehensive security assessments, even before tools like Claude Mythos become more widely available.

- Talent development: The demand for cybersecurity professionals who understand AI capabilities will surge. Regional universities and training programs should accelerate their curricula.

- Vendor relationships: Companies using software from the Glasswing participants will benefit indirectly as those vendors patch newly discovered vulnerabilities.

- Regulatory frameworks: Governments may need to update cybersecurity regulations to account for AI-powered vulnerability discovery, covering both its defensive use and its potential misuse.

The Broader AI Safety Question

Project Glasswing represents one of the first instances where a major AI lab has voluntarily restricted access to a capability because of its dual-use potential. This is not about the model being "too intelligent" in some abstract sense. It is about a specific, measurable capability: finding and exploiting software bugs faster than human defenders can patch them.

This raises important questions for the AI industry. How should labs handle capabilities that could be weaponized? Who decides what is "too dangerous" to release? How do we balance the defensive benefits against the risks of proliferation?

Anthropic's approach is not perfect. Restricting access to large corporations still concentrates power. Smaller organizations and open-source projects may remain vulnerable while waiting for patches to trickle down. But it represents a genuine attempt to navigate difficult tradeoffs rather than simply racing to release.

What Comes Next

Anthropic has indicated that they eventually aim to deploy Mythos-class models more broadly once new safeguards are implemented. The question is whether the defensive community can build adequate protections before similar capabilities emerge from other sources.

For AI practitioners and security professionals alike, this announcement marks a turning point. The theoretical concern about AI-powered cyberattacks has become concrete. The response will shape not just cybersecurity but the broader trajectory of how we deploy advanced AI systems.

I will be watching closely as Project Glasswing participants begin disclosing the vulnerabilities they find. The next few months could see an unprecedented wave of patches across critical software. Whether defenders can stay ahead of attackers remains the central security question of the AI era.