The boundary between human and machine capabilities in research has shifted again. Sakana AI's AI Scientist v2 just achieved something that seemed years away: producing a fully AI-generated scientific paper that passed peer review at an ICLR workshop. This is not a drafting assistant or a co-pilot. This is an end-to-end system that formulates hypotheses, runs experiments, analyzes results, and writes complete manuscripts autonomously.

What Makes AI Scientist v2 Different

The original AI Scientist (v1) required human-authored code templates to function. Version 2 eliminates this dependency entirely. The system now generalizes across diverse machine learning domains without needing researchers to set up scaffolding for each new area.

The core innovation is a progressive agentic tree search methodology. Rather than following a linear path from idea to paper, the system branches into multiple parallel experiments. An experiment manager agent guides this process, selecting the most promising branches, iterating on them, and backtracking when paths lead to dead ends.

This approach mirrors how productive research labs actually work. You do not commit to a single hypothesis and follow it to completion. You explore multiple directions, let data guide your choices, and pivot when necessary.
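The branching-and-backtracking idea can be illustrated with a generic best-first tree search. This is a minimal sketch under stated assumptions, not Sakana's implementation. The hypothetical `propose` and `run` callables stand in for the language model that writes experiment variants and the sandbox that executes them, and the learning-rate toy problem is invented purely for illustration. The "manager" always expands the highest-scoring successful node. Failed runs are recorded but never expanded, so backtracking falls out of the search order automatically.

```python
import heapq
import itertools
import math
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Node:
    """One experiment in the search tree: a config plus its measured outcome."""
    config: dict
    score: Optional[float]  # None means the run failed (crash, divergence, ...)
    depth: int
    parent: Optional["Node"] = None
    children: List["Node"] = field(default_factory=list)


def tree_search(
    root_config: dict,
    propose: Callable[[dict], List[dict]],   # hypothetical: proposes child experiments
    run: Callable[[dict], Optional[float]],  # hypothetical: runs one experiment
    budget: int = 20,
    max_depth: int = 4,
) -> Node:
    """Best-first tree search over experiments.

    Always expands the highest-scoring successful node. When a branch
    dead-ends, the next pop falls back to the best node on any other
    branch (implicit backtracking).
    """
    root = Node(root_config, run(root_config), depth=0)
    runs, best = 1, root
    tie = itertools.count()  # tie-breaker so heapq never compares Node objects
    frontier = []
    if root.score is not None:
        heapq.heappush(frontier, (-root.score, next(tie), root))

    while frontier and runs < budget:
        _, _, node = heapq.heappop(frontier)
        if node.depth >= max_depth:
            continue  # too deep: keep the result, stop refining this branch
        for cfg in propose(node.config):
            if runs >= budget:
                break
            child = Node(cfg, run(cfg), node.depth + 1, parent=node)
            node.children.append(child)
            runs += 1
            if child.score is None:
                continue  # dead end: recorded in the tree, never expanded
            heapq.heappush(frontier, (-child.score, next(tie), child))
            if best.score is None or child.score > best.score:
                best = child
    return best


# Toy usage (invented): tune a learning rate by halving or doubling it.
def propose(cfg: dict) -> List[dict]:
    return [{"lr": cfg["lr"] / 2}, {"lr": cfg["lr"] * 2}]


def run(cfg: dict) -> Optional[float]:
    if cfg["lr"] > 0.1:
        return None  # pretend training diverges above lr = 0.1
    return -(math.log10(cfg["lr"]) + 3) ** 2  # toy metric, peaks at lr = 1e-3


best = tree_search({"lr": 0.1}, propose, run, budget=30, max_depth=8)
print(best.config, round(best.score, 4))
```

On this toy problem the search discards the diverging lr = 0.2 run, keeps following the halving branch, and settles on lr = 0.1 / 2**7 (about 7.8e-4), the closest value to the toy optimum that halving can reach. A fuller version might also spend some of the budget trying to repair failed runs instead of abandoning them outright.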

The Peer Review Milestone

One of the papers generated by AI Scientist v2 was submitted to the ICLR 2025 "I Can't Believe It's Not Better" (ICBINB) workshop. It received an average reviewer score of 6.33, above the workshop's average acceptance threshold for human-authored papers and higher than roughly 55% of the human-authored submissions. As agreed in advance with the organizers, the paper was withdrawn after review rather than published.

This matters because peer review is designed to catch exactly the kinds of errors you would expect from automated systems: methodological gaps, overstated claims, missing context, and technical inaccuracies. The fact that reviewers (who knew some submissions might be AI-generated, but not which ones) scored it above the acceptance threshold speaks to the system's capability.

The work was subsequently published in Nature, validating the scientific significance of the approach itself.

How the System Works

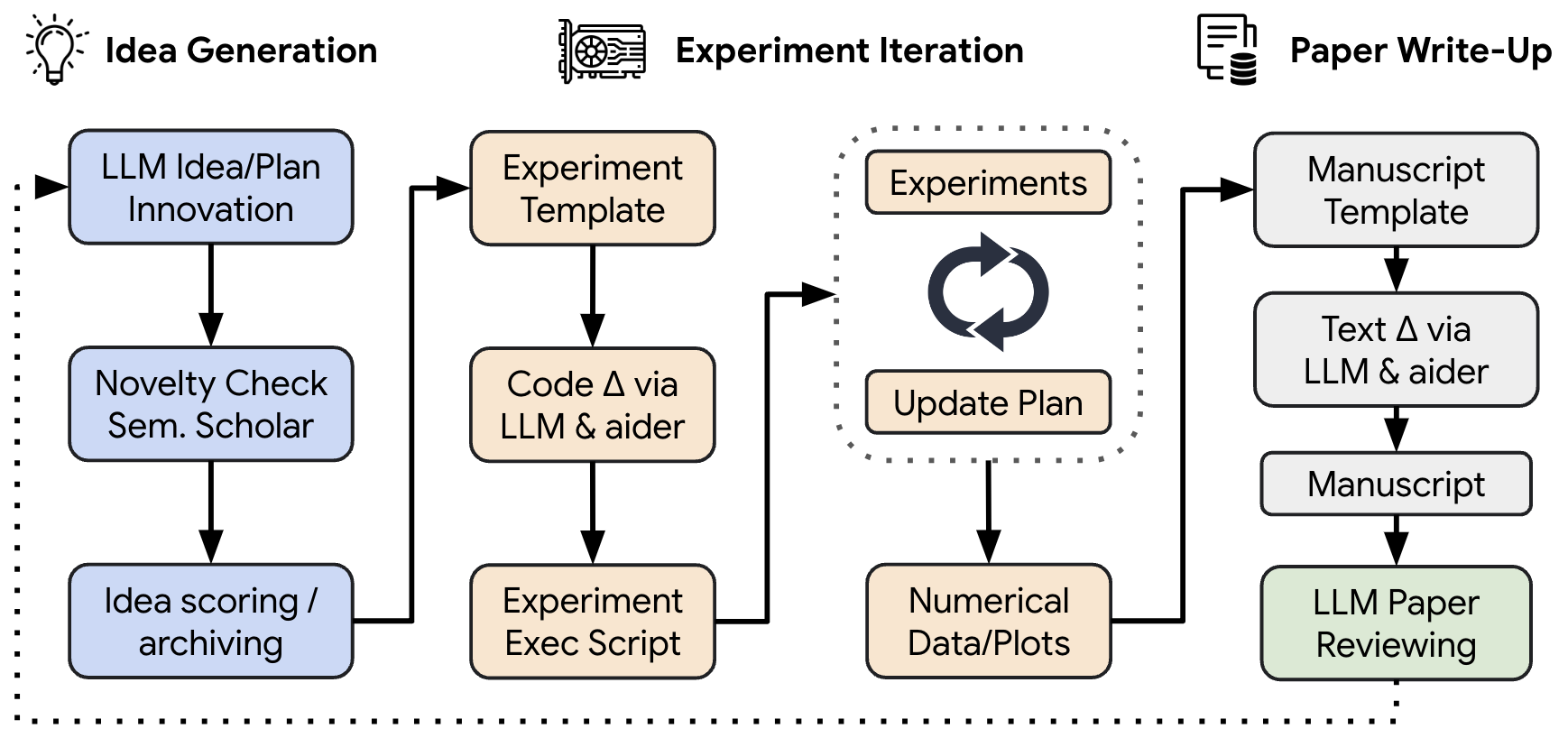

AI Scientist v2 operates through several integrated components:

Idea Generation Agent: Brainstorms research questions and searches the existing literature to check their novelty. This reduces the risk of the system rediscovering known results.

Experiment Manager: Orchestrates multiple experiments using code generation, runs them in sandboxed environments, and analyzes results. This is where the tree search happens.

Paper Writing Module: Composes complete LaTeX manuscripts with proper formatting, citations, and figures.

Vision-Language Model Feedback Loop: A new addition in v2 that iteratively refines figures for both content accuracy and visual clarity. The system essentially reviews its own visualizations.

Automated Reviewer: Acts as an Area Chair, synthesizing five independent reviews following official NeurIPS guidelines. This component achieved 69% balanced accuracy, which is comparable to human reviewers and actually exceeds inter-human agreement rates from the NeurIPS 2021 consistency study.
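To make the 69% figure concrete: balanced accuracy averages the true-positive rate (good papers accepted) and the true-negative rate (weak papers rejected), so a reviewer cannot score well just by rejecting everything at a selective venue. The sketch below pairs the metric with a deliberately simple, hypothetical Area Chair rule (accept if the mean of five review scores is at least 6.0). The real system has a language model write the meta-review; the rule, the threshold, and the review data here are all assumptions for illustration.

```python
from statistics import mean
from typing import List, Sequence


def area_chair_decision(review_scores: Sequence[float], threshold: float = 6.0) -> bool:
    """Hypothetical meta-review rule: accept if the mean reviewer score clears a threshold."""
    return mean(review_scores) >= threshold


def balanced_accuracy(predicted: Sequence[bool], actual: Sequence[bool]) -> float:
    """Average of the true-positive rate and the true-negative rate."""
    tp = sum(1 for p, a in zip(predicted, actual) if p and a)
    tn = sum(1 for p, a in zip(predicted, actual) if not p and not a)
    pos = sum(1 for a in actual if a)
    neg = len(actual) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need at least one accepted and one rejected paper")
    return (tp / pos + tn / neg) / 2


# Made-up data: five review scores per paper, plus the true accept/reject decision.
reviews: List[List[int]] = [
    [7, 6, 8, 6, 7],  # mean 6.8
    [5, 4, 6, 5, 5],  # mean 5.0
    [6, 6, 5, 7, 6],  # mean 6.0
    [4, 5, 3, 4, 4],  # mean 4.0
]
actual = [True, False, False, False]

predicted = [area_chair_decision(r) for r in reviews]
print(balanced_accuracy(predicted, actual))   # ensemble rule
print(balanced_accuracy([False] * 4, actual))  # "reject everything" baseline
```

On this made-up data the reject-everything baseline is right on 3 of 4 papers (75% plain accuracy) yet gets only 0.5 balanced accuracy, while the ensemble rule reaches about 0.83.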

Implications for Research Practice

I see three immediate implications for researchers and institutions in the Gulf and Middle East:

Acceleration of literature synthesis: Before you can do novel research, you need to understand what already exists. AI Scientist v2's literature search and hypothesis generation could compress months of reading into hours, helping researchers at newer institutions compete with established labs.

Resource multiplication: Running parallel experiments computationally is cheaper than hiring additional researchers. Teams with limited headcount can explore more ideas simultaneously.

Quality benchmarking: The automated reviewer component could help researchers improve their own papers before submission, providing feedback that approximates the review process.

However, the creators at Sakana AI acknowledge clear limitations. The system still struggles with "deep methodological rigor" and occasionally generates inaccurate citations (hallucinations). It works best on computational experiments where results can be verified automatically. Wet lab work, fieldwork, and social science research remain beyond its scope for now.

The Scaling Observation

Perhaps the most consequential finding from the research is this: as underlying foundation models improve, the quality of generated papers increases correspondingly. This suggests we are not looking at a one-time achievement but at a trajectory. If the trend holds, better base models will yield better science.

This has implications for AI investment strategy. Organizations funding foundation model development are indirectly funding automated research capabilities. The compound effects could be significant.

Looking Forward

The AI Scientist v2 code is open-source on GitHub, which means researchers worldwide can build on it. I expect we will see domain-specific adaptations: systems fine-tuned for materials science, drug discovery, or climate modeling.

For practitioners, the immediate takeaway is not that human researchers are obsolete. The system cannot replace scientific intuition, ethical judgment, or the kind of cross-domain creativity that leads to paradigm shifts. But it can handle the mechanical aspects of research at a scale and speed that were previously impossible.

The question for research institutions is no longer whether to engage with these tools, but how to integrate them responsibly. That includes watermarking AI-generated content (as the Sakana team recommends), maintaining human oversight on publication decisions, and developing new norms for attribution when AI contributes substantially to research outputs.

We are entering an era where the bottleneck on scientific progress may shift from the speed of experiments to the speed of ideas. That is a transition worth watching closely.